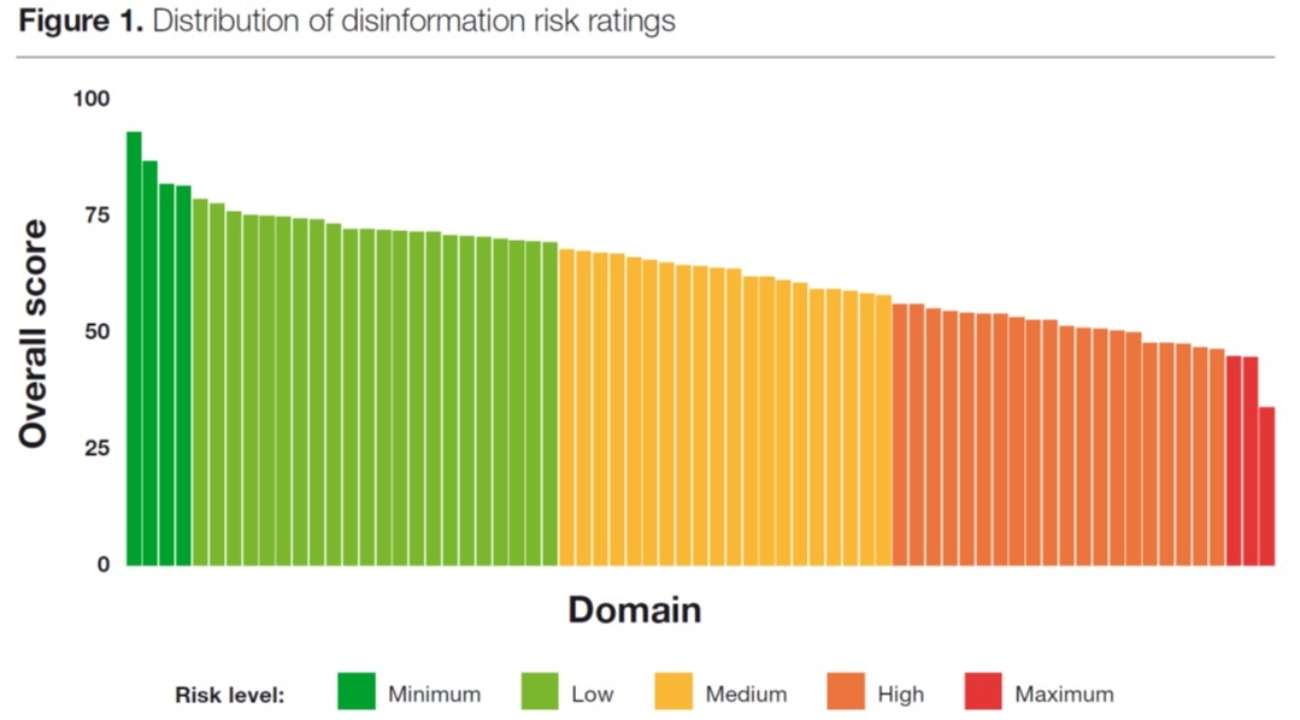

The Global Disinformation Index (GDI) is a British organization that evaluates news outlets' susceptibility to disinformation. Its ultimate aim is to persuade online advertisers to blacklist dangerous publications and websites.

...

GDI's recent report on disinformation notes that the organization exists to help "advertisers and the ad tech industry in assessing the reputational and brand risk when advertising with online media outlets and to help them avoid financially supporting disinformation online."

The U.S. government evidently values this work; in fact, the State Department subsidizes it. The National Endowment for Democracy—a nonprofit that has received $330 million in taxpayer dollars from the State Department—contributed hundreds of thousands of dollars to GDI's budget, according to an investigation by The Washington Examiner's Gabe Kaminsky.

Should the State Department spend public money to help an organization pressure advertisers to punish U.S. media companies? The answer, quite obviously, is no: For good reason, the First Amendment prohibits the U.S. government from censoring private companies, and government actors should not try to evade the First Amendment's protections by censoring indirectly or exerting inappropriate pressure.

...

More:

U.S. State Department funds a disinformation index that warns advertisers to avoid ‘Reason’

‘Reason’ magazine is listed among the "ten riskiest online news outlets" by a government-funded disinfo tracker.

Related articles (including news about Microsoft dumping GDI after The Washington Examiner's reporting broke):