NCLA Files Class-Action Against Massachusetts for Auto-Installing Covid Spyware on 1 Million Phones

Nov 15, 2022

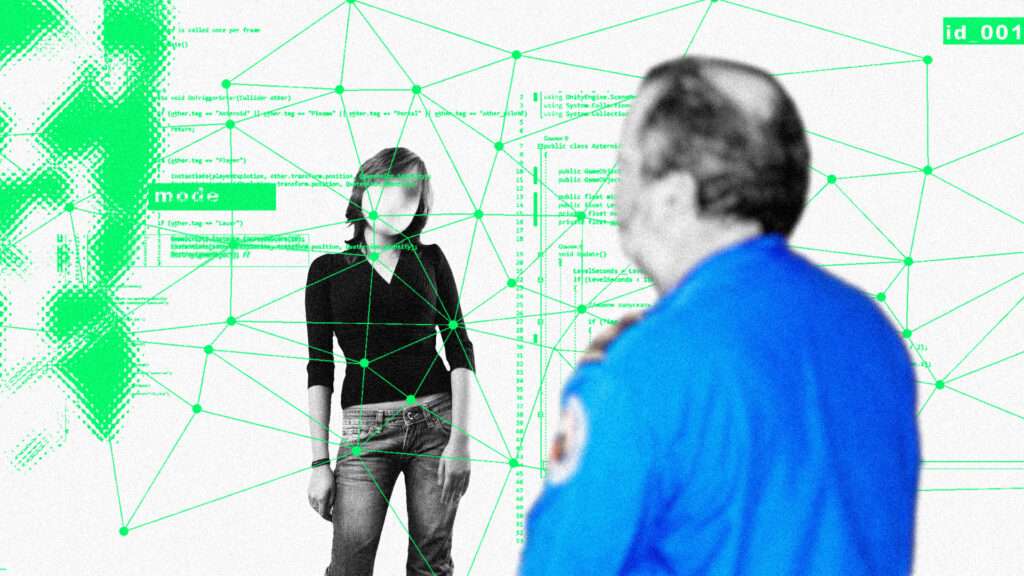

Washington, DC (November 15, 2022) – The Massachusetts Department of Public Health (DPH) worked with Google to auto-install spyware on the smartphones of more than one million Commonwealth residents, without their knowledge or consent, in a misguided effort to combat Covid-19. Such brazen disregard for civil liberties violates the United States and Massachusetts Constitutions and cannot stand. The New Civil Liberties Alliance, a nonpartisan, nonprofit civil rights group, has filed a class-action lawsuit, Wright v. Massachusetts Department of Public Health, et al., challenging DPH’s covert installation of a Covid tracing app that tracks and records the movement and personal contacts of Android mobile device users without owners’ permission or awareness.

Plaintiffs Robert Wright and Johnny Kula own and use Android mobile devices and live or work in Massachusetts. Since June 15, 2021, DPH has worked with Google to secretly install the app onto over one million Android mobile devices located in Massachusetts without obtaining any search warrants, in violation of the device owners’ constitutional and common-law rights to privacy and property. Plaintiffs have constitutionally protected liberty interests in not having their whereabouts and contacts surveilled, recorded, and broadcast, and in preventing unauthorized and unconsented access to their personal smartphones by government agencies.

Once “automatically installed,” DPH’s contact tracing app does not appear alongside other apps on the Android device’s home screen. The app can be found only by opening “settings” and using the “view all apps” feature. Thus, the typical device owner remains unaware of its presence. Because few Massachusetts citizens were downloading the app’s initial version, which required voluntary adoption, DPH apparently decided to mass-install it onto over one million Android devices without device owners’ knowledge or consent. When smartphone owners delete the app, DPH simply re-installs it. Plaintiffs’ class-action lawsuit contains nine counts against DPH, including violations of their Fourth and Fifth Amendment rights under the U.S. Constitution, and violations of Articles X and XIV of the Massachusetts Declaration of Rights.

No statutory authority supports DPH’s conduct, which serves no public health purpose, especially since Massachusetts has ended its statewide contact-tracing program. No law or regulation authorizes DPH to secretly install any type of software—let alone what amounts to spyware designed specifically to obtain private location and health information—onto the Android devices of Massachusetts residents. The U.S. District Court for the District of Massachusetts should grant injunctive relief, along with nominal damages, to the class. NCLA is unaware at this time of other states that engaged in a similar surreptitious strategy of auto-installing contact-tracing apps. It appears Massachusetts iPhone users had to consent before a similar app installed on their devices.

NCLA released the following statements:

“Many states and foreign countries have successfully deployed contact tracing apps by obtaining the consent of their citizens before downloading software onto their smartphones. Persuading the public to voluntarily adopt such apps may be difficult, but it is also necessary in a free society. The government may not secretly install surveillance devices on your personal property without a warrant—even for a laudable purpose. For the same reason, it may not install surveillance software on your smartphone without your awareness and permission.”

— Sheng Li, Litigation Counsel, NCLA

“The Massachusetts DPH, like any other government actor, is bound by state and federal constitutional and legal constraints on its conduct. This ‘android attack,’ deliberately designed to override the constitutional and legal rights of citizens to be free from government intrusions upon their privacy without their consent, reads like dystopian science fiction—and must be swiftly invalidated by the court.”

— Peggy Little, Senior Litigation Counsel, NCLA

For more information visit the case page here.